G-YNTHETIC LABS // INTELLECTUAL PROPERTY

The Axioms

F.R.A.C.C. Framework

The Claim: Linear Chain-of-Thought is inefficient. Intelligence requires Fractal Recursion.

The Proof: A hierarchical reasoning model that breaks complex strategic goals into atomic executable actions.
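
As an illustration only, the idea of breaking a goal down into atomic actions can be sketched as a recursive walk over a goal tree. This is a minimal sketch and not the F.R.A.C.C. implementation: the `Goal` class, the `atomic_actions` function, and the example plan are all hypothetical names introduced here, and a goal with no sub-goals is assumed to be "atomic".

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Goal:
    """Hypothetical goal-tree node; a goal without children is treated as atomic."""
    name: str
    children: list[Goal] = field(default_factory=list)


def atomic_actions(goal: Goal) -> list[str]:
    """Recursively flatten a goal tree into its leaf actions, depth-first."""
    if not goal.children:
        return [goal.name]
    actions: list[str] = []
    for child in goal.children:
        actions.extend(atomic_actions(child))
    return actions


# Made-up example plan, used only to show the recursion.
plan = Goal("launch product", [
    Goal("build", [Goal("write code"), Goal("run tests")]),
    Goal("announce", [Goal("draft post")]),
])

print(atomic_actions(plan))
```

The same function is applied at every level of the tree, which is the sense in which the decomposition is recursive rather than a single linear chain.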

The Trililiquary

The Claim: Context loss is a geometry problem, not a token problem.

The Proof: A "Slipstream Manifold" framework for frictionless state transitions in high-dimensional vector space.

Spontaneous Association (SAE)

The Claim: LLMs can identify relationships between disparate data nodes via entropy injection.

The Proof: Observed nascent forms of spontaneous association in "Dream State" engine cycles.

Building a 4D Gradient

The Claim: Logic without time is hallucination.

The Proof: Methodology for mapping semantic relationships into temporal vector spaces.

Symbolic Cognition

The Claim: Symbols are the ultimate compression algorithm for neural weights.

The Proof: Frameworks for lossless compression layers in high-dimensional thought.

Simulating Diverse Minds

The Claim: To simulate a mind, you must simulate its bias.

The Proof: Core thesis on simulating diverse cognitive patterns within a unified symbolic framework.

Pre-Linguistic Inference

The Claim: Reasoning predates language. LLMs that only "speak" cannot "think".

The Proof: A "Pre-Linguistic Scaffold" that forces AI to ideate before it tokenizes.

Symbolic Grid Atlas

The Claim: 3D Memory Lattices require a coordinate-based interchange format.

The Proof: The .SGA format for serializing and persisting 343-node cognitive states.
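
To make "coordinate-based interchange" concrete, here is a minimal sketch of addressing and round-tripping a 343-node lattice. It rests on assumptions that the source does not state: that the 343 nodes form a 7 x 7 x 7 cube (343 = 7^3), and that a JSON payload is an acceptable stand-in for serialization. The actual .SGA layout is not specified here, so `SIDE`, `to_index`, `to_coord`, `dumps`, and `loads` are hypothetical names.

```python
import json

SIDE = 7  # assumption: 343 nodes arranged as a 7 x 7 x 7 cube


def to_index(x: int, y: int, z: int) -> int:
    """Map an (x, y, z) lattice coordinate to a flat index in 0..342."""
    return x + SIDE * (y + SIDE * z)


def to_coord(i: int) -> tuple[int, int, int]:
    """Inverse of to_index: recover (x, y, z) from a flat index."""
    return (i % SIDE, (i // SIDE) % SIDE, i // (SIDE * SIDE))


def dumps(state: dict[tuple[int, int, int], str]) -> str:
    """Serialize a sparse lattice state to JSON (a stand-in, not the real .SGA layout)."""
    ordered = sorted(state.items(), key=lambda kv: to_index(*kv[0]))
    nodes = [{"x": x, "y": y, "z": z, "value": v} for (x, y, z), v in ordered]
    return json.dumps({"side": SIDE, "nodes": nodes})


def loads(text: str) -> dict[tuple[int, int, int], str]:
    """Rebuild the coordinate-keyed state from the JSON produced by dumps."""
    doc = json.loads(text)
    return {(n["x"], n["y"], n["z"]): n["value"] for n in doc["nodes"]}


# Made-up state with two populated nodes, used only to show the round trip.
state = {(0, 0, 0): "origin", (6, 6, 6): "far corner"}
restored = loads(dumps(state))
print(restored == state)
```

Storing explicit coordinates rather than bare list positions keeps the payload readable when only a few of the nodes are populated.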

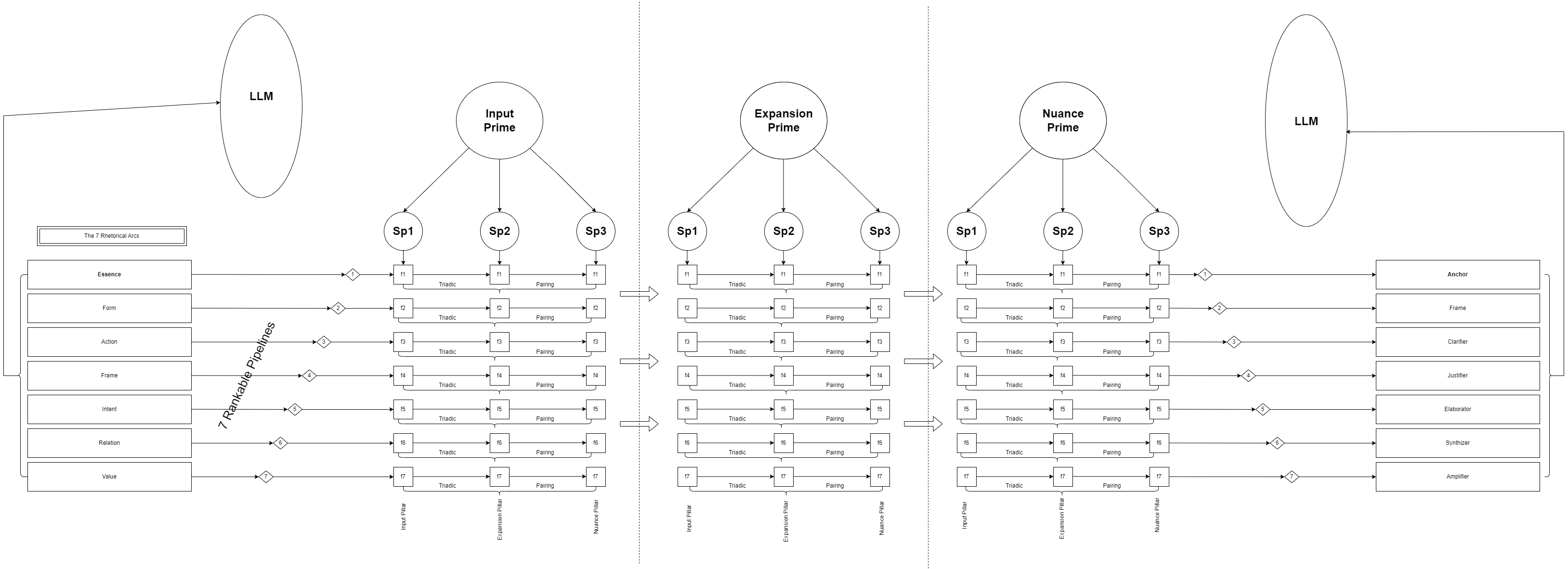

Architecture Part 1

The Claim: Position bias limits context. Hierarchical state propagation recovers it.

The Proof: Specifications for a multi-dimensional holographic memory lattice.

The Oracle Mirage

The Warning: Over-reliance on probabilistic models creates a societal "Mirror Trap".

The Solution: Structural Grounding (The G-ynthetic Engine).